Some initial thoughts on checks

Martin Jansson

Introduction

Michael Bolton gave a talk at Agile 2009 and later wrote about a distinction between testing and checking [1]. He has since elaborated on the idea and dug deeper into it [2], and continues to do so [3]. Clarifying what we are doing at a given time makes it easier to determine, among other things, which tools and heuristics to use. I think this distinction helps us as testers to do a better job, and to explain to non-testers both why we need testing and checking and what the difference is.

The idea that you can (and should) automate all testing is not interesting. It is like discussing the automation of all decision making. In a discussion like this we can instead offer that checks are usually good to automate, but that testing is not as easy to automate, since there is no true pass/fail result when it comes to open-ended information that might have different value to different people. James Bach gives an example of how this is handled in BDD [4], or rather makes it obvious that some checks might be easy to confirm while others are close to impossible.
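To make the difference concrete, here is a minimal sketch in Python. The function `apply_discount` and the questions listed are hypothetical and only for illustration; they do not come from any real system or from the posts cited. The check has a binary outcome that a machine can decide, while the open-ended questions need a person's judgement.

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    return round(price * (1 - percent / 100), 2)


def check_discount() -> bool:
    """A check: one specific observation compared with one expected value."""
    return apply_discount(200.0, 10.0) == 180.0


# Open-ended questions like these have no single pass/fail answer.
# Their value differs between people, so they are hard to automate.
OPEN_QUESTIONS = [
    "Is rounding to two decimals acceptable to the people handling invoices?",
    "What should happen with a negative or a 150 percent discount?",
]

if __name__ == "__main__":
    print("check passed:", check_discount())
    for question in OPEN_QUESTIONS:
        print("to explore:", question)
```

The check is cheap to run on every build, but it answers only the one question it was written for.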

When I talk about testing vs. checking and touch on the subject of sapience [5], many testers who are known to promote checking as their main activity feel uncomfortable. What is really important is to point out that they do exploratory testing as well [6], though perhaps without framing [7] or structuring it well. I’ve heard the argument that exploratory testing is less structured and formal. Well, perhaps for you it is. Why not change that?

Simon Morley makes some interesting observations in his post on Sapient checking [8], and I agree with him that talking about testing and checking might confuse more than it helps when we are delivering information to inform decisions. Should the information we deliver focus on whether it came from checking or from testing? In most cases, I believe it should not.

Test Driven Checking

If we assume that testing and checking are intertwined, you might still consider that in some cases one drives the other, or at least that one activity is in focus while the other is in the background.

I test differently depending on how well I have organised my charters and missions for testing during a project. Sometimes when I run a test session (following principles from SBTM [9]) I take notes on the tests and checks I run on the system, mixing the two activities. Afterwards I might go through a checklist and mark items off as done. The checklist might be coverage related, requirements related or whatever else was suitable. I might have stored most of the information in my session notes, but I might still go into other areas to mark it there as well, if that is easily done. A colleague did exploratory testing, without note taking, and then went into an Excel sheet to check off coverage areas. He then reported progress and coverage based on that.

To avoid missing some checks, consider taking a peek at the things to check in an area after you have tested it and are ready to move on. By doing so you might avoid confirmation bias [10] and inattentional blindness. You might also get new ideas for testing when you see the things that you should check.
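The peek at the checklist can be sketched as a simple set difference. This is only an illustration under assumed names: the function `unchecked_items` and the example items are hypothetical, and real session notes are rarely this tidy.

```python
def unchecked_items(checklist: set[str], covered: set[str]) -> list[str]:
    """Return the checklist items not yet covered in the session, sorted."""
    return sorted(checklist - covered)


# Hypothetical checklist for one area, and what the session notes show as covered.
area_checklist = {
    "login with valid user",
    "login with locked user",
    "password reset link",
}
covered_in_session = {"login with valid user", "password reset link"}

remaining = unchecked_items(area_checklist, covered_in_session)
print(remaining)
```

Whatever is left in `remaining` is a candidate for a quick check before moving on, and possibly a prompt for a new test idea.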

When you do regression testing you might consider first testing around the area of the bug, and then, as a final step, checking whether the reported bug is in fact fixed.

Check Driven Testing

This way of testing is probably the most common one among those who are unfamiliar with the concepts of exploratory testing. As I see it, you might have a list of checks (or, for some, scripted tests) that you go through, but you might test around them as well. In this case the checks are driving the testing. When you have one-liner test ideas as your main focus, I think it is most common that you let them drive you. So depending on how you define them you will have a different focus. To simplify: are they “What if”-focused or “Verify that”-focused?

When you retest a fixed bug you might choose to first check that the bug, as it was reported, is actually fixed, and then continue with valuable testing around it to see that nothing else has broken in the vicinity, as far as you can tell.

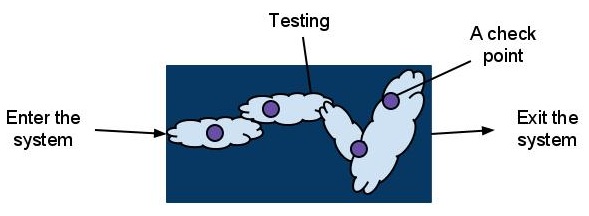

If you do a testing tour you might set out some designated targets (check points) and do your testing along the way, reporting what you actually find out.

Consider how kids might act when they walk on their own along one side of the street and all of a sudden see their parents on the other side. The kid forgets everything they have learnt about traffic rules and risks, and just runs across the street to the parents. I believe we can see this with testing and checks as well. When you get to the point where you can do the check, you forget all the other things that you should really be testing, which is a case of inattentional blindness.

Conclusion

This is just one way of looking at it, so this is no real conclusion. For me it helps to make a distinction between doing Test Driven Checking and Check Driven Testing. By being aware of the distinction you might plan your testing differently, and you might consider a bit more carefully where you put your checks and where you do your testing. They will affect each other and they will be drawn to each other.

Well done. Sometimes I despair a little that people will understand the difference between checking and testing (and why this distinction really matters). But now I’ve read this, and I feel that there’s hope.

If we asked someone to give us a report on a tree, that person might report, “there are 15,000 leaves on this tree”. What would we consider problematic about such a report? For one thing, it tells you almost nothing about the tree. For another, it tells you nothing about the leaves either. What it might do is indicate that the person observing the tree sees only the leaves, and not the limbs, nor the branches, nor the bark, what kind of tree it is, what form it takes, or whether it’s actually healthy. The report doesn’t tell you about the soil and water and air upon which the tree depends, nor does it tell you about the plants and animals and insects that participate in the tree’s ecosystem. It doesn’t tell you anything about the fruit of the tree, or the other uses that people might make of the tree.

And then some organizations tout the fact that they have 15,000 automated tests that they run on each build.

I don’t despair that people will understand the difference between testing and checking, but it might take time for us to get really good at explaining what the difference is and why it’s important. And even then, it will take a while for people to catch on. Better metaphors might help.

—Michael B.

Thanks for the nice article. I am still learning my way around all these testing concepts so this is definitely helpful.

This is interesting, and I guess checking and testing are intertwined in many, complex ways.

When verifying a bug fix I always check the repro steps first, and then test around the area,

UNLESS the bug fix is part of a more major re-write, in which case I’d do the testing first, and then the checking of the bug later.

I don’t think this is just personal style, I think it has to do with the main purpose of the code change.

If it’s a “small” thing, checking is suitable as the first activity (and if I see a problem, I can stop and communicate it.)

If it’s “larger”, testing is my best bet, so I can learn and understand how to test and check it best (bug verification is a side-activity, less important.)

Thus, the order of your testing should be arranged so you can find important problems, fast.

@James, thank you.

@Michael, If we use your metaphor I would expect a report to give:

– I checked what kind of tree it was and it was an oak.

– I checked a random set of 100 leaves. 3 were made of rubber and nailed to the tree, the rest were actual leaves from an oak.

– I tested the tree as a whole and it seems to be healthy, with no dead limbs or any sign of rot.

– During my testing I noted that there was a hole in the tree that had been home to a squirrel, most probably.

– I checked the width and it was 2 meters wide which usually means it is 100 years old (made up result).

– During my testing I noticed that someone had tried to graft a limb from an apple tree on to it, but that experiment had failed. It did not seem to have affected the health of the tree.

– As for automation we can use a tree scan to determine the composition of the tree as well as the shape. This will naturally not give us a full report, but it will give us something.

We do want to avoid meaningless checks and instead turn them into valuable checks that give an answer to a question we would like answered.

@Rikard, I think we use Test Driven Checking and Check Driven Testing as strategies in our testing. Which one to use depends on context, and each comes with its known pitfalls.