In search of the potato… Rikard Edgren

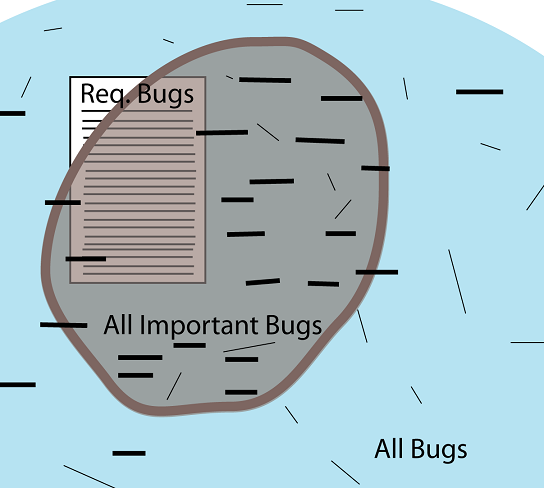

When preparing for a EuroSTAR 2009 presentation, I drew a picture to try to explain that you need to test a lot more than the requirements, but that we don’t have to (and can’t) test everything. The qualitative dilemma is to look for and find the important bugs in the product.

Per K. instantly commented that it looked like a potato; judge for yourself:

Software Potato

The square symbolizes the features and bugs you will find with test cases stemming from requirements (which can’t and shouldn’t be complete).

The blue area is all bugs, including things that maybe no customers would consider annoying.

The brown area is all important bugs, those bugs you’d want to find and fix.

So how do you go from the requirements to “all important bugs”?

You won’t have time to create test scripts for “everything”.

So maybe you do exploratory testing (thin lines in many directions), and hope for the best.

Or maybe you test around one-liners (thicker horizontal lines), which are more distinct, are reviewed, and have a better chance of finding what’s important.

With either option, some luck and a large portion of hard work are needed.

But I think you have a much better chance if you are using one-liners, especially if it’s a larger project.

Later I realized that one-liners aren’t essential; this problem has been solved many times, in many places, with many different approaches.

What these approaches have in common could be that testers learn a lot of things from many different sources, combine them, think critically, and design tests (in advance or on-the-fly) that will cover the important areas.

Maybe we need a name for this method; it could be Grounded Test Design.

Hi Rikard,

Actually I see many analogies between grounded theory and exploratory testing. Both are about letting information emerge while using the data/product, rather than theorizing and designing upfront. They are about comparing the output of different tests, making assumptions, and modifying the next tests and the underlying model on-the-go.

So when I hear ‘grounded test design’ I immediately see it as the process of letting the tests emerge from the software, by questioning the software and adapting the approach in the process. Basically, this is exactly the same as what a sapient, holistic exploratory testing process should do.

What is your take on the difference between ‘grounded design’ and ‘exploratory testing’?

Regards,

Zeger

Zeger: that is a very difficult question, since I’m not 100% sure which definition of Exploratory Testing I want to use.

But let’s use the definition that Exploratory Testing is an approach.

The difference is that ET is a way to think, a style of testing that can be applied to any technique, method, activity; whereas Grounded Test Design is a technique/method/activity that could be used both in Exploratory and Scripted environments.

On the other hand scripted testing is often about verifying the requirements, which isn’t what I am talking about.

Many of the things that Exploratory Testing proponents talk about fit perfectly well in Grounded Test Design (which doesn’t have anything really new, except the name…), so it could very well be a part of ET, if ET embraces reviewing and light-weight documenting in advance.

I will very soon explain more about Grounded Test Design.

Your potato is very similar to the amoeba presented by Jaroslav Tulach (http://openide.netbeans.org/tutorial/test-patterns.html); it’s about API design from a developer’s view, but similarities can be found for testers.

Thanks for the link, Petr; the amoeba is more beautiful, but the potato is faster to draw…

There is also an important difference in content:

In the amoeba model, it is seen as a bad thing that the application isn’t identical to the specifications.

In my model it is natural (and often good!) that the requirements don’t match the application’s reality.