The Scripted and Exploratory Testing Continuum

Henrik Emilsson

I have been using the Scripted – Exploratory Testing Continuum (for one source of this, see page 56 in http://www.ryber.se/wp-content/EssentialTestDesign.pdf ) in classes to explain how scripted testing and exploratory testing intertwine, and to explain that most of our testing is somewhere in the middle of the continuum.

But I have also had some issues with this description.

If this continuum exists, when can you say that you are doing more exploratory than scripted (and vice versa)?

How do you recognize when your testing is more exploratory than scripted (and vice versa)?

I do not believe that these questions can be answered because I think it has to do with the mindset of the person who sets the approach; and how far this particular mindset can be stretched.

The continuum is a simplified (yet useful) description of how your testing approach is a mix of the two approaches. The simplification lies in the implication that our testing approach can be found at some point on the continuum. But I think that someone who has an exploratory testing approach is doing more or less exploratory testing; and someone who has a scripted testing approach is doing more or less scripted testing. Maybe this is how many of you already think of this?

However, I would like to visualize the differences in a slightly different way in order for me to understand this better.

Testing can be viewed from different points of view, and if I as a tester look at my own testing approach, I can make good use of the continuum. But the decision on which approach we should focus on is often driven by the management of the project or test team. So, from a managerial point of view, the testing approach is driven by a core dimension (interest, factor) that is applied more or less strictly. That is, I think each approach can be seen as a set of continuums, where each continuum consists of a certain dimension; and you will only modify these individual dimensions, not the entire approach.

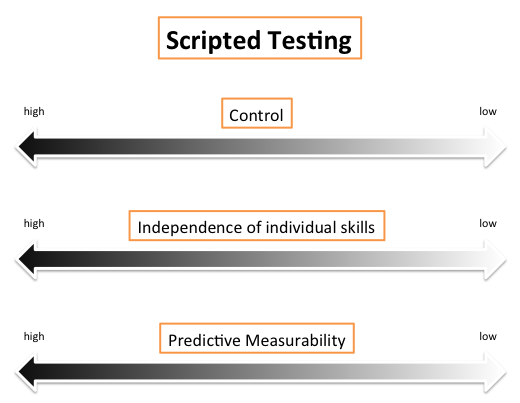

Scripted Testing Continuums (some examples)

Exploratory Testing Continuums (some examples)

Let’s say that my main interest is Control, and I believe that a scripted testing approach is the best option for fulfilling that interest. If I now gradually become less strict in my demand for control, I won’t transition into an exploratory testing approach. My approach would instead become gradually less scripted.

In the same fashion, if my main interest is Trust, my approach would gradually become less exploratory, but never transition into a scripted approach.

I think this is because the approach is a mindset, or rather an ideology. And it is hard for many people to change their mindset/ideology because their approach is derived from their school of thought.

If you have a scripted approach to testing, it is because of something you strongly believe in. If you have an exploratory approach to testing, it is because of something else you strongly believe in.

Interesting thoughts. I like the reference to the management viewpoint – who, in some ways, might not care about the continuum.

One question that comes to mind: When might it be useful to describe the continuum or even find one’s place on it? Is it more useful for some than others?

I could read some of your examples as symptoms of test management style, of tester style/approach, or of latent best practice (due to cognitive fluency). I could also read them as symptoms of the different frames that organisations and testers apply to the test project.

If testers build their brand, they’ll establish more trust and gain more autonomy from control. Some people/organisations may happen to have one natural default style due to factors in their work environment: who they have worked with (tested alongside), their experiences, openness to new approaches, their own attitude to uncertainty and inquisitiveness, or framing and cognitive fluency problems. These could all be separate from what they think about testing (whether in terms of study or reflection).

In some industries (e.g. mobile handsets for the handling of the air interface) there is a “scripted requirement” – GCF conformance testing. These could be viewed as “checks” – although when I was involved with this type of testing (2003-4) I loved finding faults in the tests as well as the products. So in this sense I considered I was doing “scripted testing” rather than “scripted execution” – thoughts of this (and the continuum) triggered a short discussion with Michael Bolton on the interplay of scripted and exploratory approaches, here.

To a manager/exec/stakeholder – what do they want to know? They want information to help make a go/no-go decision.

Potential for controversy (devil’s advocate time):

Why is the continuum a useful description and in what context? Your examples polarise the scripted vs exploratory approach – I could suggest that I read a bias in your description against a scripted approach 😉 But very little testing is completely scripted, so how is the continuum useful, to whom, and under what circumstances?

Recently, I’ve started to think more along the lines of not polarising views – especially for managers and testers that do not study testing (it’s not a useful approach IMHO to exclude people because they can’t, don’t want to, or are not able to study testing right now – maybe they just haven’t been reached in the right way yet -> I take that as a personal challenge to try and find better examples). Btw, I find the check vs test distinction useful as /one/ way to illustrate elements of “good testing”.

There is a lot more to think about here – on many different levels – and I’ve only touched a small portion… Thought-provoking. Thanks!

Thanks Simon!

It was my intention to not polarize the scripted vs exploratory approach, hence the selected dimensions that I thought were positive for each approach.

I have worked in environments where independence from individual skills was really key, for many reasons. And I have worked in other projects where it was more important (for the project manager) to be able to foresee the estimated workload than to find important problems.

The only thing in my post that might be polarizing is my link to the “four school”-paper.

Having said that, this visualization of the different approaches is useful for anyone who wants to understand that approaches are influenced by several factors; it is useful for understanding which drivers are affecting the selected approach. It is also useful for any of us who want to understand more about software testing.

This kind of visualization could help explain if you ever find a testing project where everything is completely scripted. I have been working in at least one such project.

If you say that very little testing is completely scripted, which I think is true, this is useful for understanding where the approach comes from. If you find yourself in a project where you perform some scripted testing and some exploratory testing, it is important to understand the management drivers if you want to know what they think is important.

Let’s say that in your project you do a mix of 50% scripted testing and 50% exploratory testing. In this case it makes a large difference whether your test manager has a scripted approach or an exploratory approach.

If the approach is scripted, the exploratory testing is a support to the scripted testing. If the approach is exploratory, the scripted testing is a support to the exploratory testing.

Thanks Henrik!

You answered the question from a different perspective than I’d intended – but that was due more to the way I’d structured the question 🙂

One of the points I was getting at was that the continuum is useful to visualize /if/ you study testing – and probably study it from a certain (range of) perspective(s). When you and I talk about the continuum we have a certain understanding of what’s involved (or not) – there’s a critical mass of previous information/learning (think what you might teach your students in term/year 2 that requires something from term/year 1).

I’m not saying this from an elitist approach – more an accessibility perspective – and then I started wondering what limitations there are with using this model – trying to explore its applicability and usability… Then I started thinking of a limitation due to non-study of testing.

A manager may influence the testing in ways due to the organizational latent (best) practices – a type of unknown unknowns approach (don’t realize other ways, and their relative importance and use) – that’s more a reflection on the organization and the influences it has been subject to. I’m thinking more (these days) how to tackle some of those problems – hence my line of thought.

Thanks, will continue thinking about this.

“If this continuum exists, when can you say that you are doing more exploratory than scripted (and vice versa)?

How do you recognize when your testing is more exploratory than scripted (and vice versa)?”

This is not a problem anyone really has, Henrik. The answers to these questions fall naturally out of an understanding of scripting and exploration. But the questions themselves are simply unimportant.

What *is* important is to be able to identify exploratory aspects of your testing (because we may wish to script them) and to identify scripted aspects (because we may wish to explore instead) and then to be able to evaluate the need for scripting and exploring respectively. That’s what matters. Not finding the exact place of your testing in some quantified version of the continuum.

“But I think that someone who has an exploratory testing approach is doing more or less exploratory testing; and someone who has a scripted testing approach is doing more or less scripted testing.”

These sentences aren’t saying anything, since “exploratory testing” and the “exploratory testing approach” are exactly the same thing.

I don’t understand your other continuums. They do not seem much related either to scripted testing or exploratory testing, but in any case I can’t tell what point you are trying to make.

All good testing– scripted or exploratory– relies on skill. That’s not a differentiator between ST and ET.

“Control” means different things depending on the level at which you apply it. You could say that ET is required to assert control, or you could say ST is, depending on what you mean by that concept.

The same goes for trust.

Understanding the continuum is crucial to understanding ET. In order to understand it, it helps to work with descriptive examples. Contact me on Skype and I’ll work through some of them with you.

— James

Hello, I’m a QA manager in my organization and I’m trying to introduce more exploratory testing, but it is really hard to convince other groups. I was very interested in your discussion, but I’d like more advice on how to change mindsets in an organization to go from 100% scripted to something more balanced. How can I convince people to adopt an ET approach? How can I explain the benefits of such an approach?

Thanks

Guillaume

Hi Guillaume

Something you could consider is to use the same scripts, but while executing them, add variations and complexity, and look just outside the script instructions and expected results.

You will most probably find interesting things, and when you do, raise these findings and ask: maybe we should look for more of these things?

Another thing to try is to ask for an hour with a couple of testers. Use all your knowledge and skills to dig up important problems; and along with your report, ask:

Wasn’t this very well-spent time?

In general, “real stuff” is the best argument, so another way is to talk about issues your current approach doesn’t find, and discuss how you could find them in a timely manner.

Let us know how it goes!

/Rikard

Thanks Rikard. I just finished reading The Little Black Book on Test Design. Wow, it covers a lot! Top management asked me for a presentation on ET soon. We have many groups of testers in China and India using Quality Center as the test case management system. I hate that tool, but I will follow your first piece of advice and start from what exists rather than reinventing the wheel. The cultural skills in India are not very compatible with ET, but they have identified “real world testing” as a must-have, or at least something to improve.

*I was at EuroSTAR 2008